How AI Coding Agents Call Your Tools

Inside the tool-calling loop, the MCP standard, and why your team needs access controls before deploying.

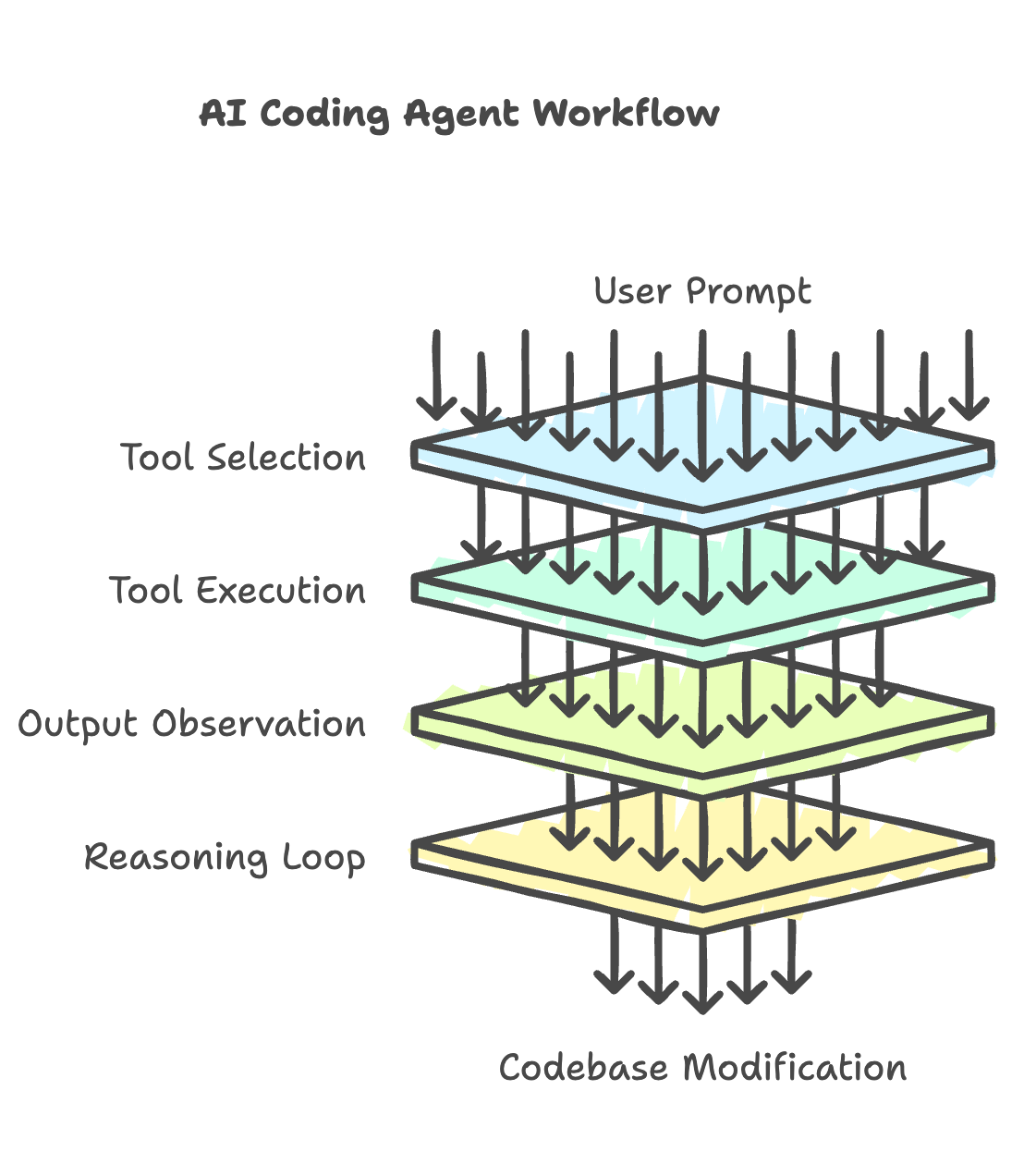

When an AI coding agent works on your codebase, it isn’t running one massive prompt. It’s calling tools: one to search your files, another to run tests, another to commit the result. Each action is a separate function call that the model decides to make at runtime.

MCP (Model Context Protocol) is the standard that connects the model to those tools. Think of it as a USB-C port between an AI model and your infrastructure. Each integration runs as an MCP server that exposes a defined set of tools. One protocol connects the model to databases, Kubernetes clusters, CI pipelines, whatever you expose.
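To make that concrete, here is a rough sketch of what a tool description and a tool invocation look like on the wire. MCP messages are JSON-RPC 2.0, and a tool is invoked with the `tools/call` method. The `search_files` tool, its schema, and the `build_tool_call` helper are hypothetical, made up for illustration.

```python
import json

# A tool roughly as an MCP server might describe it when asked to list its tools.
# The tool name, description, and schema are hypothetical.
search_tool = {
    "name": "search_files",
    "description": "Search the repository for a text pattern.",
    "inputSchema": {
        "type": "object",
        "properties": {"pattern": {"type": "string"}},
        "required": ["pattern"],
    },
}


def build_tool_call(request_id: int, name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request for the MCP tools/call method."""
    return json.dumps(
        {
            "jsonrpc": "2.0",
            "id": request_id,
            "method": "tools/call",
            "params": {"name": name, "arguments": arguments},
        }
    )


# The message a client would send when the model decides to search for "TODO".
message = build_tool_call(1, search_tool["name"], {"pattern": "TODO"})
```

Every tool call the agent makes, whatever the backend, reduces to a message of this shape. That is what makes a single access-control layer practical.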

Here’s the part that catches most teams off guard: the agent picks which tools to invoke and in what order. Nothing is hardcoded. It reasons about the task, selects a tool, observes the output, reasons again. That loop can fire 40+ calls from a single user prompt, each one hitting real systems, each one burning tokens.
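The loop itself is simple enough to sketch in a few lines. This is a minimal illustration, not any vendor's implementation: `scripted_model` is a stand-in for the real LLM, the two tools are hypothetical stubs, and the `max_calls` cap is an assumption added to show where a hard limit would sit.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ToolCall:
    name: str
    arguments: dict


# Hypothetical stub tools standing in for MCP-backed actions.
TOOLS: dict[str, Callable[..., str]] = {
    "search_files": lambda pattern: f"2 matches for {pattern!r}",
    "run_tests": lambda: "14 passed, 0 failed",
}


def scripted_model(history: list[str]) -> Optional[ToolCall]:
    """Stand-in for the LLM: return the next tool call, or None when done."""
    plan = [ToolCall("search_files", {"pattern": "TODO"}), ToolCall("run_tests", {})]
    calls_so_far = sum(1 for entry in history if entry.startswith("tool:"))
    return plan[calls_so_far] if calls_so_far < len(plan) else None


def run_agent(prompt: str, model, max_calls: int = 40) -> list[str]:
    """Reason, pick a tool, observe the output, repeat until done or capped."""
    history = [f"user: {prompt}"]
    for _ in range(max_calls):
        call = model(history)  # the model chooses the tool; nothing is hardcoded
        if call is None:
            break
        output = TOOLS[call.name](**call.arguments)  # hits a real system in production
        history.append(f"tool:{call.name} -> {output}")  # output feeds the next decision
    return history


transcript = run_agent("Fix the TODOs and verify", scripted_model)
```

Note that the caller never names a tool. The only things the surrounding code controls are which tools exist in `TOOLS` and how many iterations the loop is allowed.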

The MCP ecosystem already has 10,000+ servers and 97 million monthly SDK downloads. Anthropic donated MCP to the Agentic AI Foundation, a Linux Foundation fund co-founded by Anthropic, Block, and OpenAI, with support from Google, Microsoft, and AWS.

For engineering teams, this creates real deployment risks. The Coalition for Secure AI (CoSAI) identified 12 threat categories and nearly 40 attack vectors specific to MCP. Some 5.5% of open-source MCP servers contain tool-poisoning vulnerabilities. Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs and weak governance.

Scope tool access before you connect your first MCP server. Set token budgets per request. Gate anything that touches production behind a human approval checkpoint. The agents will use whatever you give them access to. Decide what that is before they do.
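Those three controls can live in one small gate that every tool call passes through before it executes. The sketch below is one possible shape, not a standard MCP feature: `Policy`, `Gate`, `PolicyError`, the tool names, and the token estimates are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


class PolicyError(Exception):
    """Raised when a tool call violates the access policy."""


@dataclass(frozen=True)
class Policy:
    allowed_tools: frozenset     # scope: tools the agent may call at all
    production_tools: frozenset  # tools that need a human sign-off
    token_budget: int            # maximum tokens for a single request


class Gate:
    def __init__(self, policy: Policy, approve: Callable[[str], bool]):
        self.policy = policy
        self.approve = approve  # hypothetical callback that asks a human
        self.tokens_used = 0

    def check(self, tool: str, estimated_tokens: int) -> None:
        """Raise PolicyError unless the call is in scope, on budget, and approved."""
        if tool not in self.policy.allowed_tools:
            raise PolicyError(f"tool not in scope: {tool}")
        if self.tokens_used + estimated_tokens > self.policy.token_budget:
            raise PolicyError("token budget exceeded for this request")
        if tool in self.policy.production_tools and not self.approve(tool):
            raise PolicyError(f"human approval denied: {tool}")
        self.tokens_used += estimated_tokens  # only charged when the call is allowed


policy = Policy(
    allowed_tools=frozenset({"search_files", "run_tests", "deploy"}),
    production_tools=frozenset({"deploy"}),
    token_budget=1000,
)
gate = Gate(policy, approve=lambda tool: False)  # reviewer says no to everything
gate.check("search_files", 400)  # in scope, on budget, not production: passes
```

The gate denies by default: a tool missing from `allowed_tools` is rejected even if the agent discovers it on a connected server.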